Overview

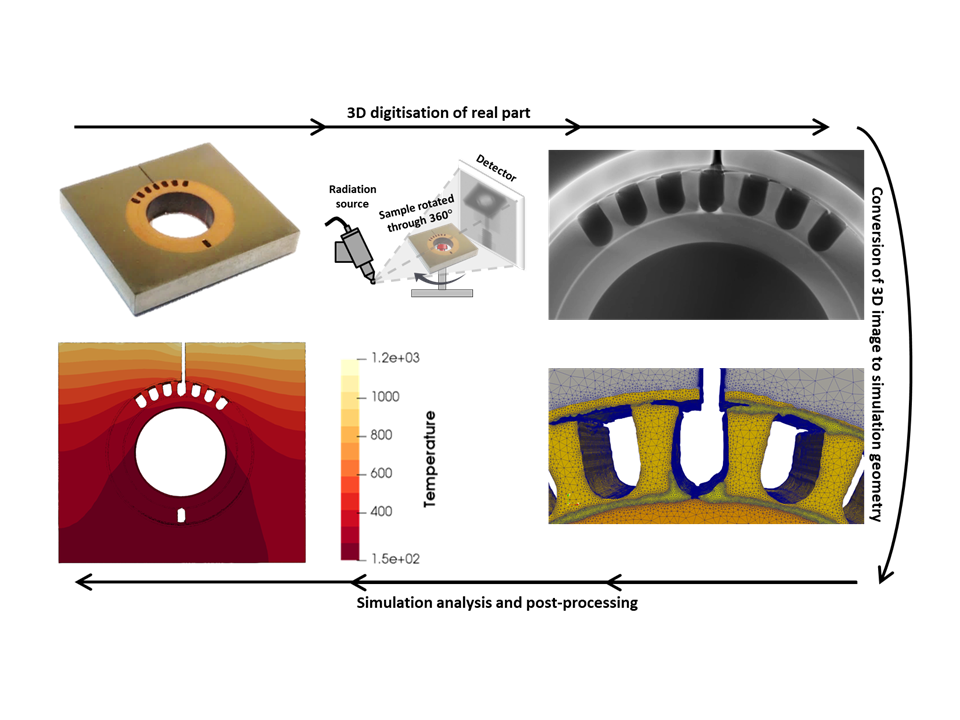

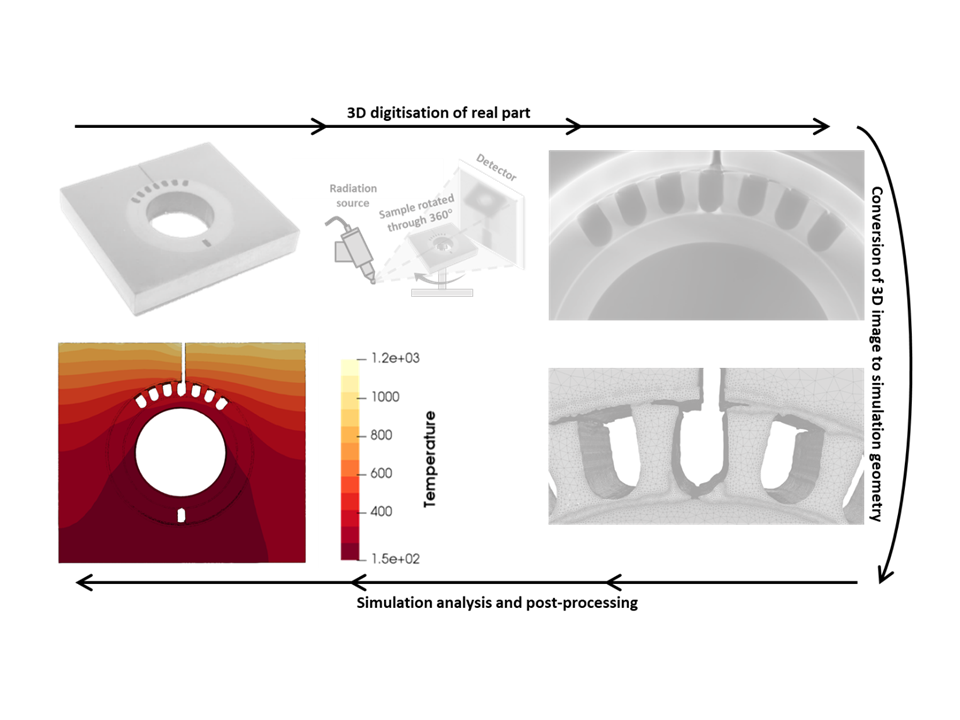

The Image-Based Simulation (IBSim) method can be broadly divided into four main stages:

- Digitisation of parts through a volumetric imaging technique

- Conversion of the volumetric image into virtual geometry

- Preparation of the geometry into a simulation-ready format

- Image-Based Simulation analysis, visualisation, and post-processing

Digitisation of parts through volumetric imaging

3D scanning techniques for producing a volumetric image range from topological scans, using methods such as laser scanning or photogrammetry, to full 3D mapping with techniques like:

- Computed Tomography (CT)

- Magnetic Resonance Imaging (MRI)

- Confocal Microscopy

- Optical Serial Sectioning Microscopy (OSSM)

- Focussed Ion Beam Cutting with Scanning Electron Microscopy (FIB-SEM)

Each technique has its own strengths and limitations. The resolution of the IBSim geometry will inevitably depend on the resolution of the image on which it is based. It is, therefore, essential to select the most appropriate technique and acquire the best resolution feasible for the given circumstances. Although some corrections may be applied with image-processing methods, they are no replacement for following best practices for the imaging technique of choice.

Conversion of volumetric image into virtual model

Once 3D data has been acquired, it must be converted into a virtual representation of the geometry ready for simulation.

Topological scans, e.g. from laser scanning, are the simplest form of imaging data from which to create IBSim models. Because they capture only the external geometry, with no internal features (e.g. micro-pores or inclusions), they are relatively small datasets (though still significantly larger than CAD-based geometries).

These often take the form of point clouds: collections of Cartesian coordinates representing the sample surface. Techniques like photogrammetry can provide additional information, such as colour, which helps distinguish between materials in multi-phase samples.

Post-processing methods are used to interpolate between the points and define surfaces. Smoothing algorithms are often used to ‘clean’ the data by removing spurious points or filling voids.
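As an illustration of the ‘cleaning’ step, the sketch below applies a simple statistical outlier filter, one common way to remove spurious points, not necessarily the method used by any particular package. It assumes the point cloud is an N × 3 NumPy array; the neighbour count `k` and cut-off `n_std` are illustrative values, and the brute-force distance matrix is only practical for small clouds.

```python
import numpy as np


def remove_outliers(points, k=8, n_std=2.0):
    """Drop points whose mean distance to their k nearest neighbours is more
    than n_std standard deviations above the cloud-wide average."""
    pts = np.asarray(points, dtype=float)
    # Brute-force pairwise distances: O(N^2) memory, fine for a small sketch.
    d = np.linalg.norm(pts[:, None, :] - pts[None, :, :], axis=-1)
    d.sort(axis=1)
    # Column 0 is each point's zero distance to itself, so skip it.
    mean_knn = d[:, 1:k + 1].mean(axis=1)
    threshold = mean_knn.mean() + n_std * mean_knn.std()
    keep = mean_knn <= threshold
    return pts[keep], keep


# Synthetic example: a flat 10 x 10 grid of surface points plus one stray point.
xs, ys = np.meshgrid(np.linspace(0, 1, 10), np.linspace(0, 1, 10))
surface = np.column_stack([xs.ravel(), ys.ravel(), np.zeros(xs.size)])
cloud = np.vstack([surface, [[0.5, 0.5, 5.0]]])
cleaned, keep = remove_outliers(cloud)
```

Here the stray point lies far from all of its neighbours, so its mean neighbour distance stands out and it is dropped, while every genuine surface point is kept.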

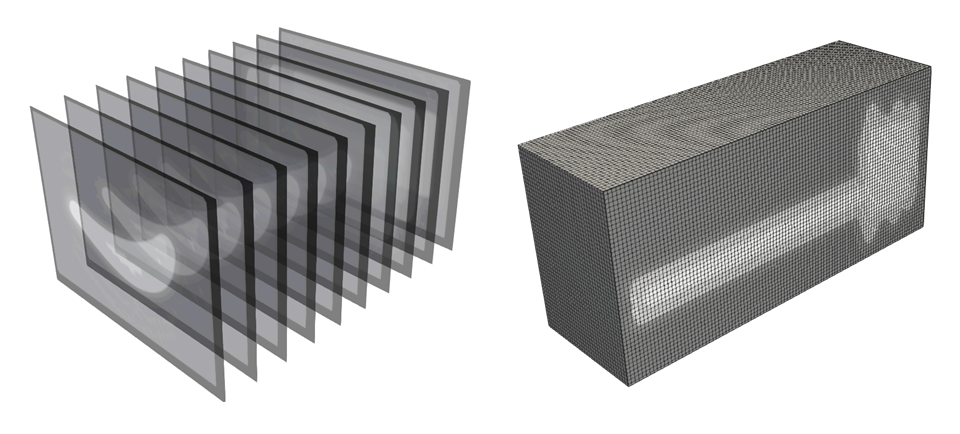

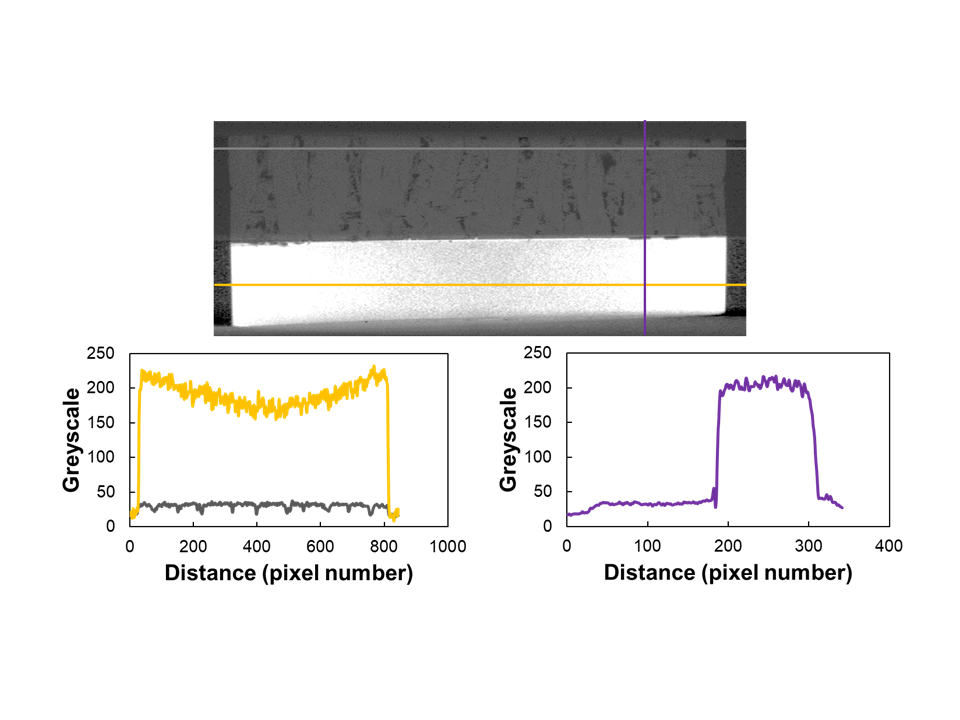

Full 3D volumetric images are data-rich and, depending on the imaging method, can include features of interest less than 1/1000th the size of the parent sample. The images typically consist of a discretised voxel domain, with each voxel (3D pixel) providing some information about that location in space. For example, in X-ray CT, a voxel records the signal attenuation at that location, which can be used to infer material density. In image format, each attenuation value is assigned a colour or grey level, and the result can be viewed with volume rendering or as 2D cross-sectional slices through the part.
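A minimal sketch of this data structure, assuming NumPy and a synthetic volume (a dense sphere in low-attenuation surroundings, with made-up attenuation values): the image is a 3D array, attenuation is linearly mapped to 8-bit grey levels, and a cross-sectional slice is simply one index along an axis.

```python
import numpy as np

# Hypothetical 64^3 CT-like volume of attenuation values (arbitrary units),
# indexed [z, y, x].
nz = ny = nx = 64
z, y, x = np.indices((nz, ny, nx))
r = np.sqrt((x - 32) ** 2 + (y - 32) ** 2 + (z - 32) ** 2)
# A sphere of denser (more attenuating) material in a low-attenuation background.
attenuation = np.where(r < 20, 0.8, 0.05)


def to_greyscale(values, vmin=None, vmax=None):
    """Linearly map values to 8-bit grey levels (0 = black, 255 = white)."""
    vmin = values.min() if vmin is None else vmin
    vmax = values.max() if vmax is None else vmax
    span = (vmax - vmin) or 1.0          # avoid dividing by zero for a flat image
    scaled = (values - vmin) / span
    return np.clip(np.round(scaled * 255), 0, 255).astype(np.uint8)


grey = to_greyscale(attenuation)         # one grey level per voxel
mid_slice = grey[nz // 2]                # 2D cross-section at the middle z index
```

Mapping the whole volume at once, rather than slice by slice, keeps the grey scale consistent across all slices.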

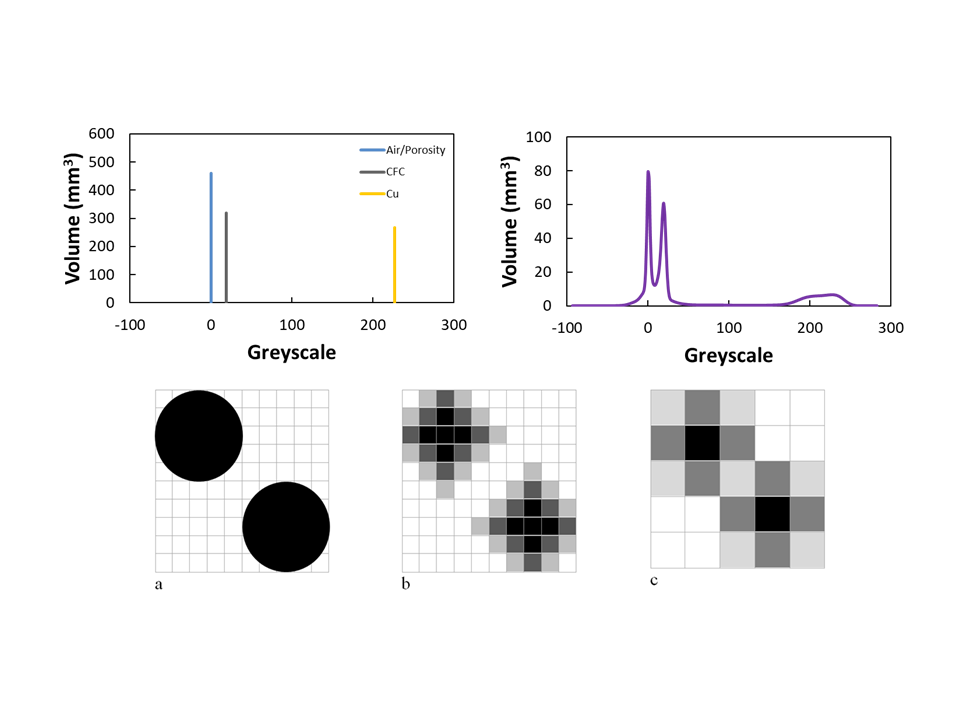

In order to define the 3D geometry, each voxel must be assigned to a particular phase or material in a process called segmentation (also known as labelling). The challenge in processing 3D images is to define phase boundaries accurately despite the discretisation of space. For example, the component edge may pass partway through a voxel, so that the greyscale value of that voxel is a mixture of background (air) and sample. The greyscale value of this voxel will therefore differ from that of the bulk of the component, and a different ratio of background to sample would give yet another greyscale value. Image resolution governs the severity of this effect, because each voxel is a cuboid with a single value that homogenises what may be a complex geometry. In addition, noise and numerical artefacts affect the accuracy of the segmentation process.
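This partial-volume effect can be illustrated with an idealised one-dimensional row of voxels. The sketch assumes each voxel's grey value is the volume-weighted mix of hypothetical bulk-sample and background values, ignoring noise, blur and artefacts, and shows how a simple mid-point threshold classifies the boundary voxel differently depending on where the edge falls within it.

```python
import numpy as np

BACKGROUND = 20.0   # assumed grey value of air
SAMPLE = 200.0      # assumed grey value of the bulk material


def voxel_values(edge_position, n_voxels=8):
    """Grey values along a row of unit voxels when the sample occupies x >= edge_position.

    Idealised partial-volume model: each voxel value is the volume-weighted
    mix of sample and background (no noise, blur or artefacts)."""
    left = np.arange(n_voxels, dtype=float)          # voxel i spans [i, i + 1)
    fraction = np.clip(left + 1 - edge_position, 0.0, 1.0)
    return fraction * SAMPLE + (1 - fraction) * BACKGROUND


midpoint = (BACKGROUND + SAMPLE) / 2                 # simple global threshold

row = voxel_values(3.4)      # voxel 3 is 60 % sample: 0.6*200 + 0.4*20 = 128
row_b = voxel_values(3.6)    # voxel 3 is 40 % sample: 0.4*200 + 0.6*20 = 92
labels = (row >= midpoint).astype(int)      # voxel 3 labelled as sample
labels_b = (row_b >= midpoint).astype(int)  # voxel 3 labelled as background
```

A shift of the edge by a fifth of a voxel flips the label of the boundary voxel, which is why finer resolution (smaller voxels) reduces the uncertainty in where a phase boundary lies.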

A segmented volumetric image will still consist of a voxelised domain, but with each voxel having a phase number rather than a greyscale value. For example, a segmented image of an orange fruit may consist of:

- two phases: background, fruit

- three phases: background, peel, interior

- four phases: background, peel, flesh, seeds

- many more phases up to what is resolvable with the available image resolution
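Once grey-level ranges for each phase are known, the simplest form of automated labelling assigns phases by global thresholds. The thresholds below for the four-phase orange are hypothetical and purely illustrative; real images usually need more careful, often partly manual, segmentation.

```python
import numpy as np

# Hypothetical grey-level boundaries for an 8-bit image, for illustration only:
# < 40 -> background (0), 40-119 -> peel (1), 120-199 -> flesh (2), >= 200 -> seeds (3)
THRESHOLDS = [40, 120, 200]
PHASE_NAMES = ["background", "peel", "flesh", "seeds"]


def segment(grey, thresholds=THRESHOLDS):
    """Return an integer phase number for every voxel using global thresholds."""
    return np.digitize(grey, thresholds).astype(np.uint8)


grey = np.array([[10, 45, 130],
                 [210, 119, 200]], dtype=np.uint8)
labels = segment(grey)                                     # phase number per voxel
counts = np.bincount(labels.ravel(), minlength=len(PHASE_NAMES))
```

The output has the same shape as the input, but each entry is now a phase number, which is exactly the change from greyscale to segmented image described above.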

Many segmentation approaches and software packages exist, ranging from fully manual voxel ‘painting’ on a slice-by-slice basis to semi- and fully automated methods assisted by image-processing algorithms. In practice, more complex images (in terms of sample geometry, number of phases, or level of noise and artefacts) tend to require more manual interaction.
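As one example of an automated, algorithm-assisted method, Otsu's method picks the single grey-level threshold that best separates an image's histogram into two classes. The NumPy sketch below assumes an 8-bit image and a two-phase split (e.g. background and sample); it is a generic illustration rather than the method of any specific segmentation package.

```python
import numpy as np


def otsu_threshold(grey):
    """Otsu's method for an 8-bit image: choose the threshold that maximises
    the between-class variance of the dark and bright classes."""
    hist = np.bincount(np.asarray(grey, dtype=np.uint8).ravel(), minlength=256)
    prob = hist / hist.sum()
    levels = np.arange(256)
    omega = np.cumsum(prob)              # weight of the class with grey <= k
    mu = np.cumsum(prob * levels)        # cumulative mean up to level k
    mu_total = mu[-1]
    denom = omega * (1.0 - omega)
    # Between-class variance for a split after level k; empty classes score 0.
    with np.errstate(divide="ignore", invalid="ignore"):
        sigma_b = np.where(denom > 0, (mu_total * omega - mu) ** 2 / denom, 0.0)
    k = int(np.argmax(sigma_b))
    return k + 1                         # voxels with grey >= k + 1 form the bright class


# Synthetic two-phase volume: half background (grey 50), half sample (grey 200).
img = np.array([50] * 500 + [200] * 500, dtype=np.uint8).reshape(10, 10, 10)
t = otsu_threshold(img)
sample_mask = img >= t
```

For noisy or multi-phase images a single global threshold like this is often not enough, which is where the manual interaction mentioned above comes in.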

Preparation of geometry into simulation-ready format

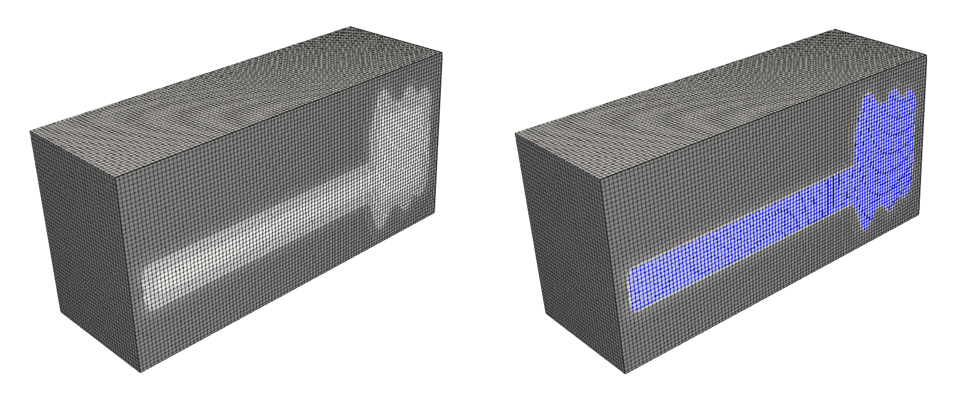

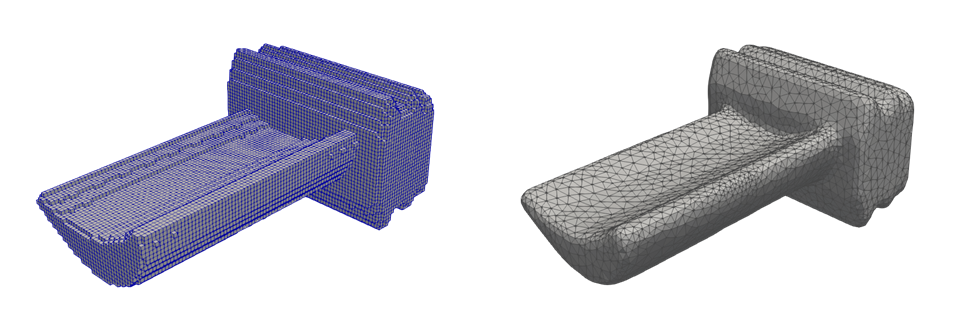

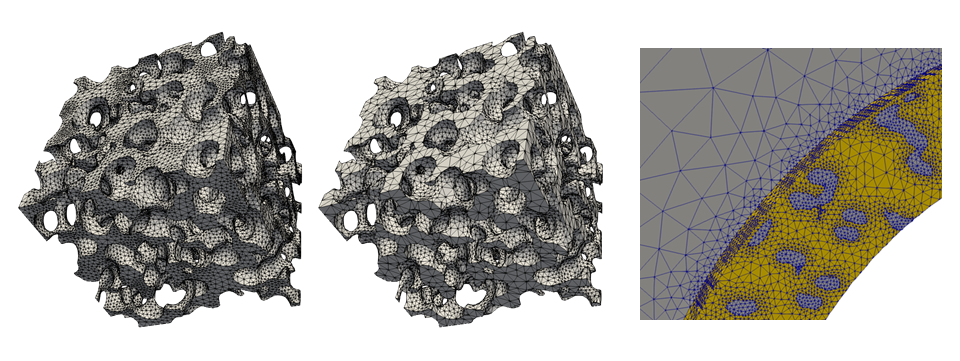

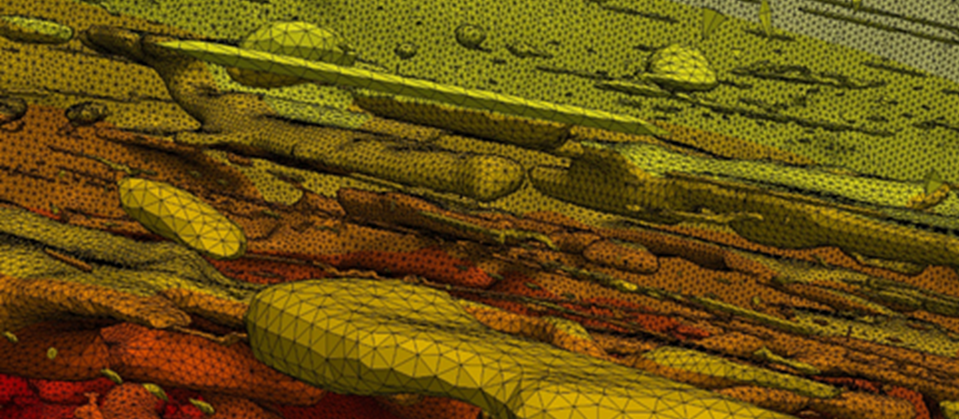

Once a voxel geometry has been defined, it is possible to perform simulation analysis directly on this data. This is often the approach taken by meshless methods, and by ‘first pass’ or ‘rapid turnaround’ mesh-based methods that use the voxels as hexahedral elements. For more in-depth analysis with mesh-based methods, such as FEA and CFD, it is usually desirable to perform some preparatory steps.
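The ‘voxels as hexahedral elements’ approach can be sketched in a few lines. This is a minimal illustration assuming NumPy, a labelled image indexed [i, j, k] along x, y, z with 0 as background, and an isotropic voxel size; the node ordering used (bottom face counter-clockwise, then top face) is a common hexahedron convention, but the ordering a given solver expects should be checked.

```python
import numpy as np


def voxels_to_hex_mesh(labels, voxel_size=1.0):
    """Turn every non-zero voxel into an 8-node hexahedral element.

    Returns (nodes, elements, element_labels); grid nodes not used by any
    element are dropped and the rest renumbered 0..N-1."""
    labels = np.asarray(labels)
    nx, ny, nz = labels.shape

    def node_id(i, j, k):
        # Index of grid node (i, j, k) on the (nx+1) x (ny+1) x (nz+1) lattice.
        return (i * (ny + 1) + j) * (nz + 1) + k

    i, j, k = np.nonzero(labels)
    corners = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),   # bottom face
               (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1)]   # top face
    elements = np.stack([node_id(i + di, j + dj, k + dk)
                         for di, dj, dk in corners], axis=1)

    # Keep only referenced lattice nodes and renumber them consecutively.
    used, inverse = np.unique(elements, return_inverse=True)
    elements = inverse.reshape(-1, 8)
    ui, rem = np.divmod(used, (ny + 1) * (nz + 1))
    uj, uk = np.divmod(rem, nz + 1)
    nodes = np.column_stack([ui, uj, uk]).astype(float) * voxel_size
    return nodes, elements, labels[i, j, k]


# Two adjacent voxels of phase 1 along x, with 0.5 length units per voxel.
labels = np.zeros((2, 2, 1), dtype=int)
labels[0, 0, 0] = labels[1, 0, 0] = 1
nodes, elements, element_labels = voxels_to_hex_mesh(labels, voxel_size=0.5)
```

Neighbouring voxels share nodes, so the two elements here are connected through a common face of four nodes; the element labels carry the phase number through to material assignment.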

Smoothing

To move from a voxelised geometry to a truer representation of real-world shapes, image smoothing can be applied.

The voxelised nature of the geometry in its current form is a consequence of the imaging data format and the discretisation of space. This can lead to numerical artefacts in the simulation analysis due to unrealistic discontinuities (i.e. the sharp, stepped edges that the voxels create on surfaces). To address this and better represent the ‘real’ geometry, smoothing is applied. Care must be taken to apply enough smoothing to approximate curved surfaces, but not so much that detail is lost. The precise technique used will depend on the dataset and the meshing software.
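Many smoothing schemes exist; Laplacian smoothing of surface vertices is one of the simplest and illustrates the trade-off described above. The sketch below is a 2D simplification, assuming a closed polyline (a ‘voxelised’ circle of radius 10 made by rounding points to integer coordinates) in place of a 3D surface mesh. A few iterations reduce the step-like roughness, while many iterations shrink the whole shape, which is exactly the loss of detail to avoid.

```python
import numpy as np


def laplacian_smooth(vertices, iterations=10, lam=0.5):
    """Laplacian smoothing of a closed polyline: each pass moves every vertex
    a fraction `lam` of the way towards the midpoint of its two neighbours."""
    v = np.asarray(vertices, dtype=float).copy()
    for _ in range(iterations):
        neighbour_avg = 0.5 * (np.roll(v, 1, axis=0) + np.roll(v, -1, axis=0))
        v += lam * (neighbour_avg - v)
    return v


def roughness(v):
    """RMS distance of each vertex from the midpoint of its neighbours."""
    lap = 0.5 * (np.roll(v, 1, axis=0) + np.roll(v, -1, axis=0)) - v
    return float(np.sqrt((lap ** 2).sum(axis=1).mean()))


def mean_radius(v):
    """Mean distance of the vertices from their centroid."""
    return float(np.linalg.norm(v - v.mean(axis=0), axis=1).mean())


# 'Voxelised' circle: points on a circle of radius 10 snapped to integer coordinates.
theta = np.linspace(0, 2 * np.pi, 200, endpoint=False)
stepped = np.round(10 * np.column_stack([np.cos(theta), np.sin(theta)]))
# Drop consecutive duplicate vertices left by the rounding.
stepped = stepped[np.any(stepped != np.roll(stepped, 1, axis=0), axis=1)]

light = laplacian_smooth(stepped, iterations=10)     # steps softened, size kept
heavy = laplacian_smooth(stepped, iterations=2000)   # over-smoothed: shape shrinks
```

Volume-preserving variants (for example Taubin smoothing, which alternates shrinking and inflating passes) exist to limit this shrinkage; which one is appropriate depends on the meshing software in use.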

Resolution

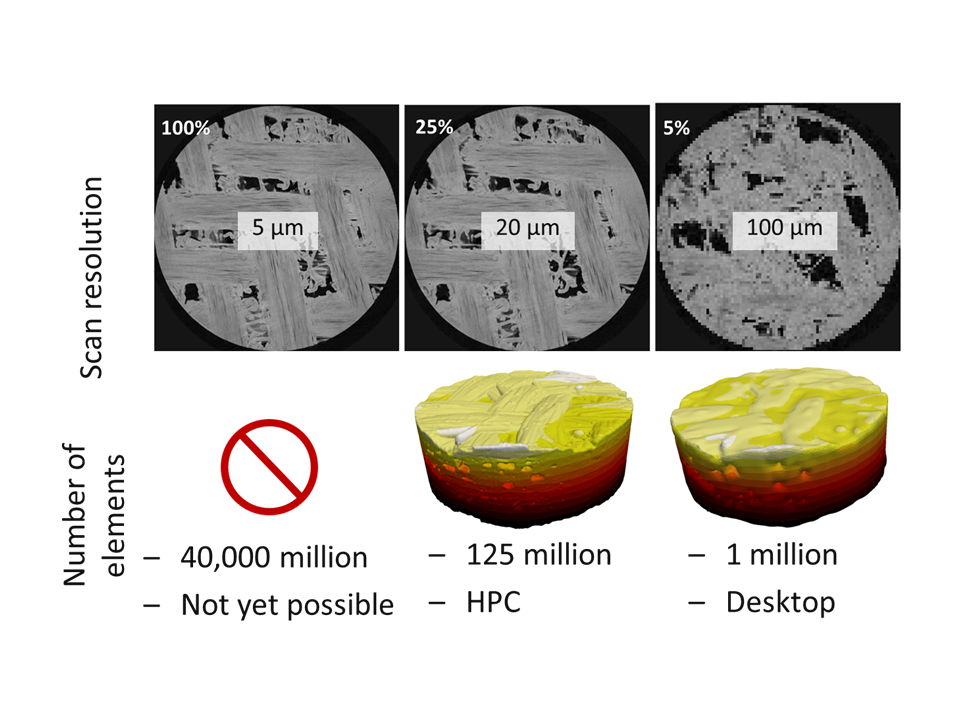

Image resolution is important in realising the potential of IBSim to improve simulation accuracy.

The resolution of model geometries is inextricably linked to the image resolution. As such, small features of interest will only be included in the simulation if they are resolvable in the images. Some post-processing may be applied to improve images, but this is no replacement for following ‘good’ imaging technique.

To capture features that are small relative to the global dimensions, IBSim meshes tend to be significantly more detailed than their CAD counterparts. For mesh-based simulations (e.g. FEA), this means a significant increase in the number of elements and thus in computational expense.

For example, a typical industrial micro-XCT scanner produces images of 2,000 × 2,000 × 2,000 voxels, i.e. 8 billion voxels. If meshed at full resolution with tetrahedral elements (five per voxel), this would produce 40 billion elements. CAD-based meshes are typically of the order of hundreds of thousands to a few million elements.
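The figures above follow from simple arithmetic, assuming each cubic voxel is split into five tetrahedra (the minimum for a cube; six is another common split, which would give 48 billion). The same hypothetical helper shows how the count falls when the image is downsampled, since halving the resolution in all three directions divides the voxel count by eight.

```python
def tet_count(voxels_per_side, downsample=1, tets_per_voxel=5):
    """Elements from meshing every voxel of a cubic image with tetrahedra.

    Assumes each voxel is split into `tets_per_voxel` tetrahedra; downsampling
    by a factor f divides the voxel (and element) count by f**3."""
    n = voxels_per_side // downsample
    return n ** 3 * tets_per_voxel


for f in (1, 2, 4, 8):
    print(f"downsample x{f}: {tet_count(2_000, f):,} tetrahedra")
# downsample x1: 40,000,000,000 tetrahedra
# downsample x2: 5,000,000,000 tetrahedra
# downsample x4: 625,000,000 tetrahedra
# downsample x8: 78,125,000 tetrahedra
```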

The two main approaches to reduce the element count are:

- Downsample the image data to a level which is still sufficient to accurately resolve features of interest without losing important detail.

- Perform local refinement of the mesh around features of interest and mesh coarsening elsewhere (as already utilised with CAD meshes).
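Downsampling can be as simple as averaging non-overlapping blocks of voxels. The sketch below assumes a greyscale NumPy volume and an integer downsampling factor. It is best applied before segmentation, because averaging phase numbers would produce meaningless fractional values, and it should be remembered that the coarser voxels reintroduce partial-volume mixing at the new scale.

```python
import numpy as np


def block_average(volume, factor):
    """Downsample a 3D greyscale volume by averaging non-overlapping factor^3 blocks.

    Trailing voxels that do not fill a whole block are cropped."""
    v = np.asarray(volume, dtype=float)
    n0, n1, n2 = (s // factor for s in v.shape)
    v = v[:n0 * factor, :n1 * factor, :n2 * factor]
    # Split each axis into (blocks, factor) and average over the within-block axes.
    return v.reshape(n0, factor, n1, factor, n2, factor).mean(axis=(1, 3, 5))


vol = np.arange(4 * 4 * 4).reshape(4, 4, 4)
ds = block_average(vol, 2)   # 4^3 voxels -> 2^3 voxels, an 8x reduction
```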

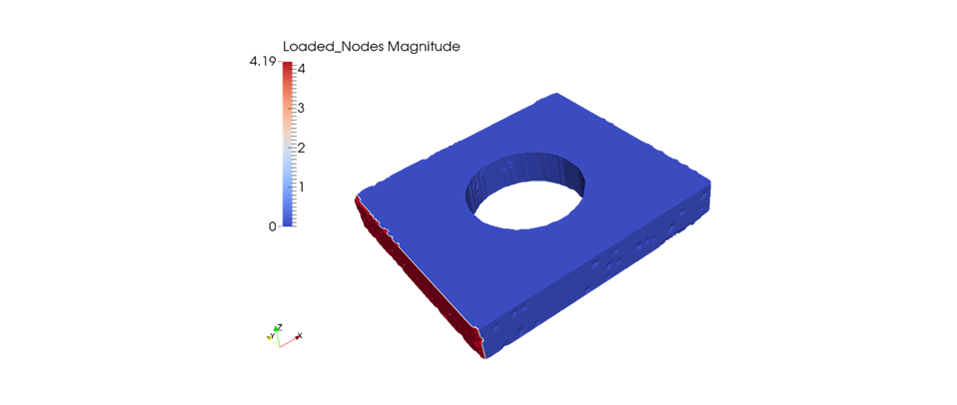

Node, edge, and surface sets:

IBSim meshes give a truer representation of surfaces, faithfully describing undulations and curved, rather than discretised (or stepped), edges. As such, they must be treated appropriately when defining node, edge, and surface sets.

CAD-based meshes are constructed from basic geometric shapes and so tend to have easily defined points, edges, and surfaces. IBSim meshes often have complex surfaces with smoothed edges, which can make it challenging to define regions where model loads and constraints are applied. For example, an outer surface may be connected to a surface deep within the sample’s volume through open porosity. Many solutions are possible, but they usually need to be implemented during the segmentation and meshing stages rather than when preparing the analysis. With careful planning, it is possible to reach the analysis stage and treat the IBSim model much like a CAD-based equivalent.
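One way to keep a constraint region well defined, assuming the region of interest was kept planar during segmentation or meshing (e.g. by cropping the image so the sample has a flat base), is to select nodes by their distance from that plane rather than selecting ‘all exterior surface nodes’, which would also pick up pore surfaces connected to the outside through open porosity. The function name and tolerance are illustrative; on a smoothed mesh the tolerance may need to be larger.

```python
import numpy as np


def nodes_on_plane(nodes, axis, value, tol=1e-6):
    """Indices of nodes whose coordinate along `axis` (0 = x, 1 = y, 2 = z)
    lies within `tol` of `value`, e.g. a flat face used for a constraint."""
    nodes = np.asarray(nodes, dtype=float)
    return np.flatnonzero(np.abs(nodes[:, axis] - value) <= tol)


# Hypothetical node coordinates: three nodes on the base plane z = 0
# (one very slightly off it) and two nodes above it.
nodes = np.array([[0.0, 0.0, 0.0],
                  [1.0, 0.0, 0.0],
                  [0.0, 0.0, 1.0],
                  [0.5, 0.5, 1e-7],
                  [0.3, 0.3, 0.4]])
base_set = nodes_on_plane(nodes, axis=2, value=nodes[:, 2].min())
```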

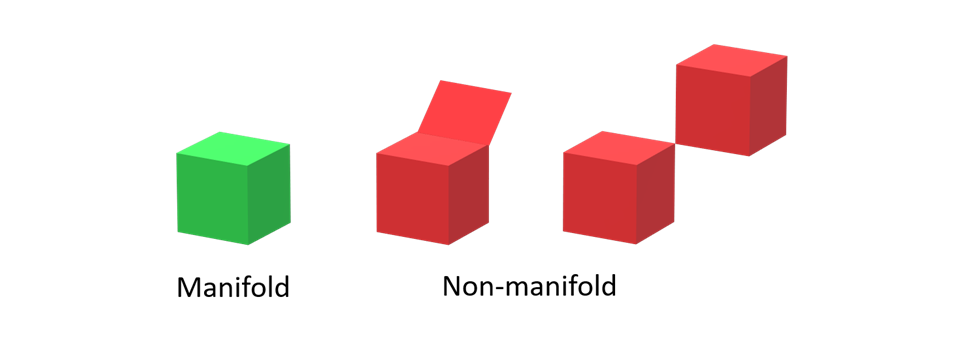

Mesh validity:

As with CAD-based simulations, mesh quality and validity impact the results. With IBSim, some additional considerations are needed.

For mesh-based simulations to complete without issue, the meshes produced must be valid. Meshing and simulation software will often perform basic checks, e.g. on the ‘quality’ of elements and whether or not the mesh is manifold. However, these checks will not catch issues in meshes that are technically ‘valid’ but will still produce computational errors.

One of the main culprits is an ‘island’, that is, a small disconnected region of voxels. This could be as small as a single voxel within an image of 8 billion voxels, which means it can easily be missed during preparation. Including such islands in the model mesh will likely cause the analysis to fail to complete; in a structural analysis, for example, an unconstrained island can leave the system of equations singular.

Relatively small-scale features or cavities within the sample volume can also lead to issues. If these features are smaller than what can be demonstrated as resolvable within the image, they cannot confidently be assumed to be ‘real’ features rather than the result of noise or an artefact. As such, including them could significantly increase computational time with no gain in the accuracy of the results.

Both these issues can be easily rectified during the segmentation stage.
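Both problems can be caught with connected-component labelling. The pure-Python sketch below assumes a boolean NumPy mask of the segmented sample and face (6-)connectivity; it keeps the largest connected region, plus optionally any region of at least `min_voxels` voxels (a hypothetical parameter that would be set from the demonstrable image resolution). Applying it to the inverted mask fills small internal cavities instead. It is far too slow for billion-voxel images; optimised equivalents exist, such as `scipy.ndimage.label`.

```python
from collections import deque

import numpy as np


def label_components(mask):
    """6-connected component labelling of a 3D boolean mask by breadth-first fill.

    Returns (labels, sizes): labels is 0 for background and 1..n for components,
    and sizes[c] is the voxel count of component c (sizes[0] is unused)."""
    mask = np.asarray(mask, dtype=bool)
    labels = np.zeros(mask.shape, dtype=np.int32)
    sizes = [0]
    offsets = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    for seed in zip(*np.nonzero(mask)):
        if labels[seed]:
            continue                          # already part of a component
        current = len(sizes)
        labels[seed] = current
        queue, count = deque([seed]), 0
        while queue:
            z, y, x = queue.popleft()
            count += 1
            for dz, dy, dx in offsets:
                n = (z + dz, y + dy, x + dx)
                inside = all(0 <= n[a] < mask.shape[a] for a in range(3))
                if inside and mask[n] and not labels[n]:
                    labels[n] = current
                    queue.append(n)
        sizes.append(count)
    return labels, sizes


def remove_islands(mask, min_voxels=None):
    """Keep the largest connected region plus any region with >= min_voxels voxels."""
    labels, sizes = label_components(mask)
    if len(sizes) == 1:                       # empty mask
        return np.zeros(labels.shape, dtype=bool)
    keep = {int(np.argmax(sizes[1:])) + 1}
    if min_voxels is not None:
        keep |= {c for c in range(1, len(sizes)) if sizes[c] >= min_voxels}
    return np.isin(labels, list(keep))


# A 3x3x3 sample with a one-voxel internal cavity, plus a one-voxel island.
mask = np.zeros((5, 5, 5), dtype=bool)
mask[1:4, 1:4, 1:4] = True
mask[2, 2, 2] = False                         # small cavity
mask[0, 0, 0] = True                          # island
cleaned = remove_islands(mask)                # island removed
filled = ~remove_islands(~cleaned)            # cavity filled (only exterior air kept)
```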

Image-Based Simulation analysis and visualisation

IBSim models are data-rich, giving unprecedented insight into localised fluctuations in behaviour due to micro-features, as well as into their global impact. High-resolution visualisation allows researchers to investigate these in detail.

If the preparation of the image-based simulation mesh has been carried out effectively, the running of the actual FEA/CFD analysis should not differ significantly from a CAD-based simulation. There are still some considerations worth noting.

The large volumes of data associated with IBSim can make handling files with conventional approaches more challenging than usual. Firstly, users must ensure that the computer they are using has the appropriate specifications, namely sufficient RAM and an adequate graphics processing unit (GPU). It is not possible to recommend precise hardware configurations because these depend on the complexity of the model geometry in question. However, the Nvidia Quadro line of graphics cards has been demonstrated to be particularly well suited to interactive visualisation and manipulation of highly detailed FEA and CFD models. Because visualising this level of geometric detail places heavy demands on the hardware, the graphical user interface (GUI) on a desktop may be less responsive than users are accustomed to.

To make running the simulation easier, it is recommended to plan a workflow that minimises use of the GUI, particularly operations that involve manually interacting with the rendered model. The most highly recommended approach is to develop a fully scriptable workflow on a low-resolution or CAD-based equivalent model, then replace the lower-resolution mesh with the high-resolution one, using the same names to refer to parts of the model (e.g. locations of loads and constraints). Depending on the simulation software, it may be possible to use this approach to launch the computational solver without using a GUI at all.
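A minimal sketch of the ‘same names’ idea, assuming the scripted workflow refers only to named regions. The region names, step types, and dictionary layout below are hypothetical and do not correspond to any solver's input format; the point is that, before swapping in the high-resolution mesh, the script checks that the new mesh defines every named set the workflow uses.

```python
# Hypothetical scripted analysis: every step refers to a named region, never to
# node or element numbers, so the same script works on any mesh defining those names.
ANALYSIS_STEPS = [
    {"type": "fixed_support", "region": "BASE"},
    {"type": "pressure", "region": "TOP_FACE", "value": 1.0e6},
]


def required_regions(steps):
    """Names of all regions the scripted workflow refers to."""
    return {step["region"] for step in steps}


def check_mesh_compatible(mesh_sets, steps=ANALYSIS_STEPS):
    """Raise KeyError if the mesh is missing any named set used by the workflow."""
    missing = required_regions(steps) - set(mesh_sets)
    if missing:
        raise KeyError(f"mesh lacks named sets: {sorted(missing)}")
    return True


# Hypothetical named sets exported with each mesh (set name -> node ids).
low_res_sets = {"BASE": [0, 1, 2, 3], "TOP_FACE": [4, 5, 6, 7]}
high_res_sets = {"BASE": list(range(0, 5000)), "TOP_FACE": list(range(5000, 10000))}

for mesh_sets in (low_res_sets, high_res_sets):
    check_mesh_compatible(mesh_sets)   # same script, different mesh resolution
```

Failing fast on a missing set name is much cheaper than discovering the problem after loading a high-resolution mesh into the solver.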

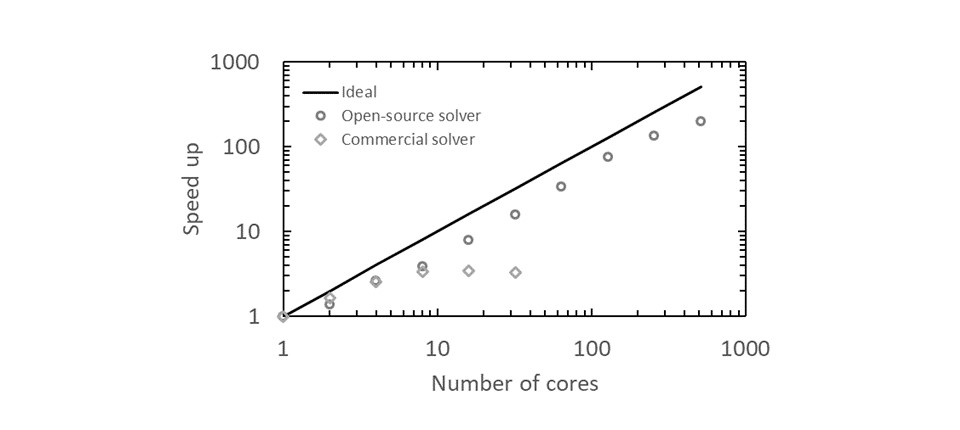

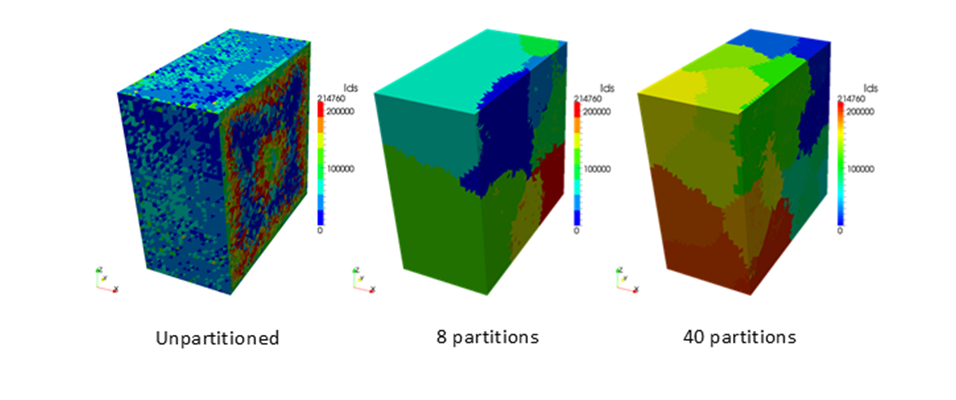

Depending on the level of detail required, mesh-based IBSim models are likely to contain tens or even hundreds of millions of elements. Conventional commercial simulation packages are not currently well suited to such large meshes because of relatively poor scalability (i.e. how efficiently they use large numbers of computing cores). However, several less well-known simulation packages have been written specifically to make optimal use of parallel or high-performance computing (HPC). We have successfully performed simulations with meshes of more than one billion elements on an HPC system, and have shown that a mesh of tens of millions of elements is well within the capability of a modest computing cluster.

To further aid solver scalability, it is also worth optimising the mesh itself with a pre-processor. Some simulation packages have this feature built in, whereas others require third-party codes. The mesh is partitioned so as to minimise communication between processors (a known bottleneck in HPC), so that CPU cores spend a greater fraction of their time on their intended function, i.e. computing.
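Production pre-processors typically use graph partitioners (e.g. METIS) that explicitly minimise the number of element connections cut between partitions. As a simpler stand-in, the sketch below uses recursive coordinate bisection on element centroids, which produces compact, equally sized partitions and so tends to keep inter-processor boundaries short. It assumes NumPy and, for brevity, a power-of-two number of partitions.

```python
import numpy as np


def rcb_partition(centroids, n_parts):
    """Recursive coordinate bisection: split element centroids into n_parts
    (nearly) equal groups by repeatedly cutting along the longest extent."""
    centroids = np.asarray(centroids, dtype=float)
    if n_parts < 1 or n_parts & (n_parts - 1):
        raise ValueError("n_parts must be a power of two in this sketch")
    if len(centroids) < n_parts:
        raise ValueError("need at least one element per partition")
    parts = np.zeros(len(centroids), dtype=int)

    def split(idx, first_part, count):
        if count == 1:
            parts[idx] = first_part
            return
        pts = centroids[idx]
        axis = int(np.argmax(pts.max(axis=0) - pts.min(axis=0)))  # longest extent
        order = idx[np.argsort(pts[:, axis], kind="stable")]
        half = len(order) // 2
        split(order[:half], first_part, count // 2)
        split(order[half:], first_part + count // 2, count // 2)

    split(np.arange(len(centroids)), 0, n_parts)
    return parts


# 32 element centroids on an 8 x 4 grid, split across 4 processors.
xs, ys = np.meshgrid(np.arange(8), np.arange(4), indexing="ij")
centroids = np.column_stack([xs.ravel(), ys.ravel(), np.zeros(xs.size)])
parts = rcb_partition(centroids, 4)
```

Equal partition sizes balance the computational load, while cutting along the longest extent keeps each partition compact, which limits how many elements sit on a boundary with another processor.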

For post-processing and visualisation of the results, it is recommended to follow the same approach as in the setup stage, that is, to minimise use of the GUI. Again, this is possible by preparing a workflow on a less geometrically detailed set of results and then applying it to the high-resolution data. Visualisation software developed specifically for large data volumes is also available: the IBSim group at Swansea University makes frequent use of ParaView by Kitware, which is open-source and well suited to data of this type. The most challenging datasets have been found to be transient (i.e. time-dependent) simulations. Creating animations of these datasets is particularly time-consuming and can sometimes take longer than the simulation itself. As such, compiling the animation with a script, without the GUI, can save significant time.